Video game security has always been a moving target. Consoles evolved into full-blown computing platforms locked down with layers of protection, but for every lock ever invented, there has always been someone determined to pick it.

Although I have always been a video game fan, I never gave much thought to any of this until I became an engineer and started diving into security. Consoles are embedded systems at their core, and the history of how their security was built, broken, and rebuilt is full of lessons that apply well beyond our living rooms.

In this article, we will walk through the history of video game console security, from the early days when there was virtually no protection at all, through decades of increasingly sophisticated defenses, and up to modern systems that use many of the same techniques found in security-sensitive embedded devices — while showing how, despite all of that, researchers and enthusiasts kept finding ways in.

I will focus primarily on home consoles rather than handheld systems, with the Nintendo Switch included as an exception because it sits at the intersection of both categories. To keep this article to a reasonable length, I will not attempt to cover every published vulnerability or exploit. Instead, I will focus on some of the most representative and popular examples. For the same reason, I will not go into full technical detail on every attack, but I will provide references and links where possible for readers who want to dig deeper.

My goal is to show how security evolved across the video game industry and what we can learn from that evolution. In the end, whether we are designing a video game console, a medical device, or an industrial system, the threat model may differ, but many of the underlying security challenges are remarkably similar.

So let’s dive in!

The wild west: when there was no lock on the door

The earliest home consoles, like the Atari 2600 (1977), had essentially no security. The hardware had no mechanism to verify whether a cartridge contained legitimate software. Any ROM chip wired to the right connector would run. The only real barrier was physical and economic: you needed the hardware to manufacture a cartridge.

Source: Wikipedia


The Atari 2600 era was the wild west of gaming. There was no code signing, no cryptographic verification, and no intentional region-locking scheme — though regional NTSC/PAL/SECAM differences still affected compatibility.

Third-party publishers like Activision were famously founded by Atari engineers who left to make their own games, because nothing technically stopped them from doing so. Atari’s only option was legal action, not technical controls.

This changed with the arrival of the Nintendo Entertainment System (NES) in 1985, which introduced the first serious attempt at hardware-enforced software control. How did that work, and how long did it last?

Hardware lockout chips and the 10NES

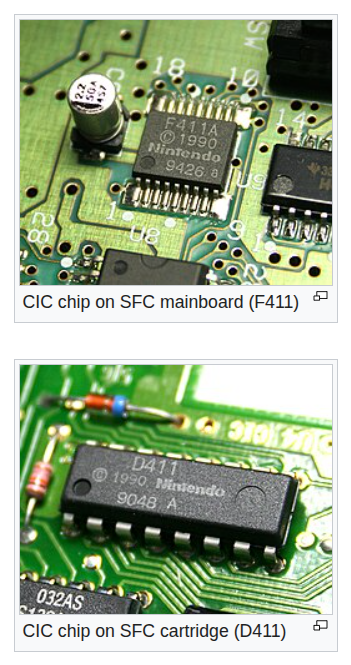

Scarred by the 1983 North American video game crash, partly caused by a market flooded with poor-quality titles, Nintendo shipped the NES with a dedicated security IC called the 10NES (later known as the CIC, or Checking Integrated Circuit), present in both the console and every licensed cartridge.

Source: Wikipedia


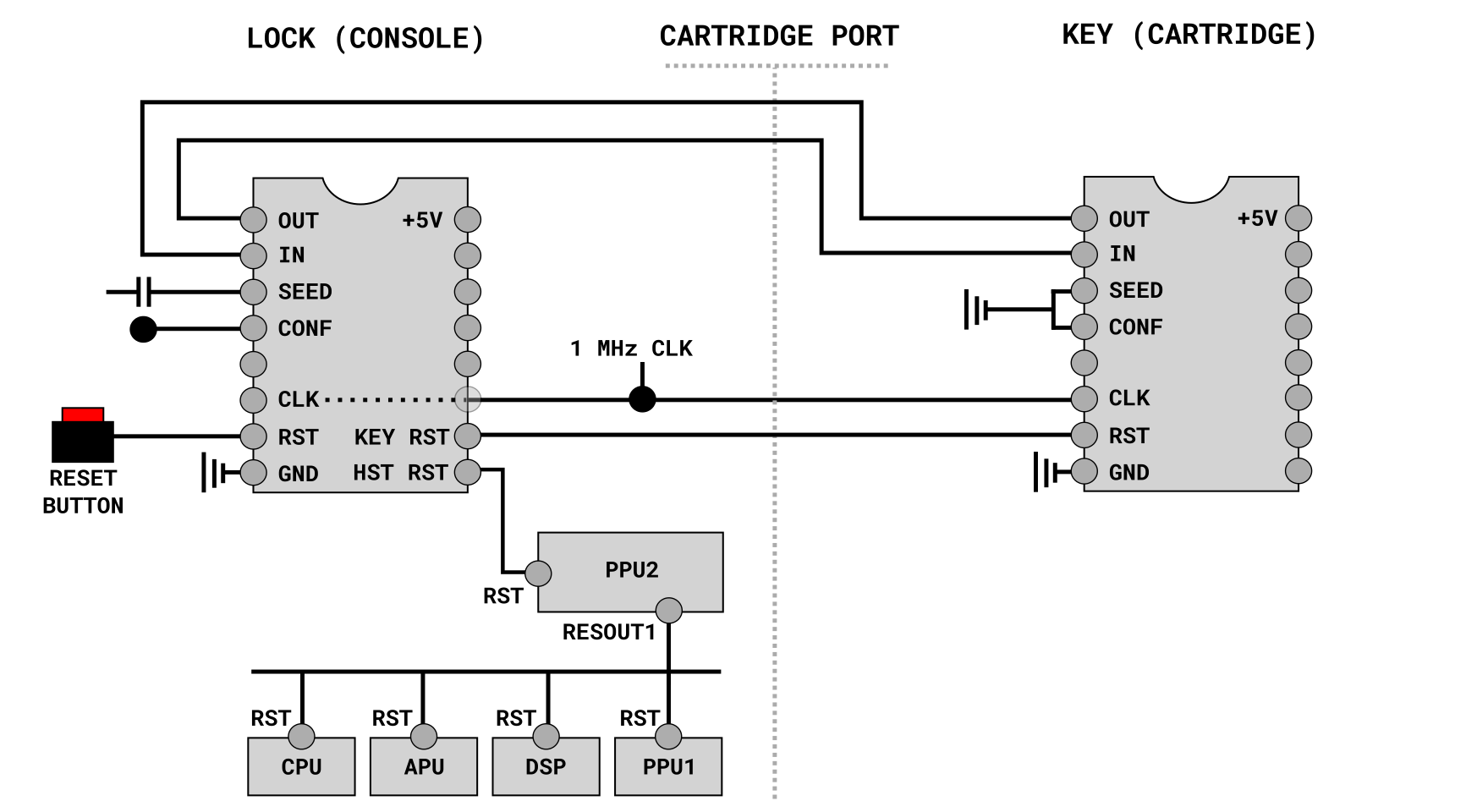

The chip was a challenge-response authentication system: a 10NES chip inside the console would communicate with a matching 10NES chip inside every licensed cartridge. If the authentication handshake failed, the console would continuously reset (once per second), producing the infamous blinking screen.

Source: Fabien Sanglard’s website

Both chips were identical 4-bit microcontrollers (Sharp SM590) running the same firmware, but operating in different roles, configured via the CONF pin in the schematics above. The console chip acted as the master (CONF=1), the cartridge chip as the slave (CONF=0).

On power-on, the two chips synchronized over a shared clock and data line and began exchanging a pseudo-random bit sequence generated by an internal shift register. The console chip drove the clock and continuously compared the cartridge chip’s output against its own expected sequence. As long as both chips stayed in sync - meaning the cartridge contained a genuine 10NES chip running the same program - authentication succeeded and the console booted normally. If the sequences diverged at any point, the console chip pulled the reset line low, causing the reset (and blinking screen).
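The lock-step comparison described above can be sketched in a few lines of Python. This is only an illustration: the 16-bit shift register, its taps, its seed, and the names `LockoutChip` and `console_boots` are assumptions made for the example, not the real 10NES algorithm.

```python
class LockoutChip:
    """Toy stand-in for a lockout chip (NOT the real 10NES program)."""

    def __init__(self, seed=0xACE1):
        self.state = seed  # both chips start from the same state

    def next_bit(self):
        # 16-bit shift register with feedback taken from bits 0, 2, 3 and 5
        s = self.state
        feedback = (s ^ (s >> 2) ^ (s >> 3) ^ (s >> 5)) & 1
        self.state = (s >> 1) | (feedback << 15)
        return s & 1  # the bit that was shifted out


def genuine_cartridge():
    """A cartridge holding the same chip and program as the console."""
    chip = LockoutChip()
    while True:
        yield chip.next_bit()


def cartridge_without_chip():
    """Nothing drives the data line, so the console only ever reads 0."""
    while True:
        yield 0


def console_boots(cartridge_bits, steps=64):
    """Console side: compare the cartridge stream with our own, bit by bit."""
    console = LockoutChip()
    for _ in range(steps):
        if next(cartridge_bits) != console.next_bit():
            return False  # mismatch: the real console pulls the reset line
    return True


print(console_boots(genuine_cartridge()))
print(console_boots(cartridge_without_chip()))
```

Note that nothing in `LockoutChip` is secret except the algorithm itself: anyone who can copy the class can build a cartridge that passes the check.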

Crucially, there was no secret key involved — both chips ran the exact same algorithm, and the security relied entirely on that algorithm being unknown and difficult to extract from silicon. This is security through obscurity in its purest form!

It was also the first widespread use of hardware-based software authentication in a consumer device. And it effectively locked out unlicensed cartridges - at least for a while, because the 10NES was reverse-engineered relatively quickly…

Tengen, Atari’s game publishing subsidiary, famously reverse-engineered the chip and manufactured their own clone (Rabbit) to produce unlicensed NES cartridges. Yes, you read that right, Atari hacked Nintendo! This led to the landmark lawsuit Atari Games Corp. v. Nintendo of America (1992).

The homebrew community, meanwhile, discovered that physically disabling the CIC chip by cutting specific pins on the chip inside the console would prevent it from pulling the reset line low, allowing the console to boot regardless of what cartridge was inserted.

Some unlicensed manufacturers went further, using a voltage spike to shut down the CIC mid-authentication before it could pull the reset line — an early, crude example of a fault injection attack, the same class of hardware technique that would later be used to defeat the Xbox 360’s cryptographic boot chain.

Nintendo carried the CIC approach across three generations of cartridge-based consoles (the NES, the Super NES, and the Nintendo 64), refining the chip with each iteration to make it harder to clone. But the fundamental weakness never changed: authentication was based purely on the physical presence of a compatible chip, with no cryptographic verification of the actual game code. Once you understood the protocol, you could clone or bypass the chip. It was only with the GameCube (2001), which abandoned cartridges for proprietary miniDVDs, that Nintendo finally left the CIC model behind.

This era established a pattern that would repeat itself for decades: a security measure based on obscurity or hardware availability, followed by reverse engineering, followed by a bypass. How did the transition to optical media change the game?

Optical discs and the birth of the modchip

The PlayStation (1994) moved from cartridges to CD-ROMs, and Sony knew that increasingly affordable CD-R drives made piracy a serious threat. So it created a copy-protection scheme built on two linked checks. First, the drive firmware looked for a special region-specific SCEx authentication signal encoded in a non-standard wobble area near the inner part of the disc. Second, the disc’s region/licensing identifiers had to match the console’s market. In essence, the whole protection relied on a custom media format that ordinary consumer CD writers could not reproduce.

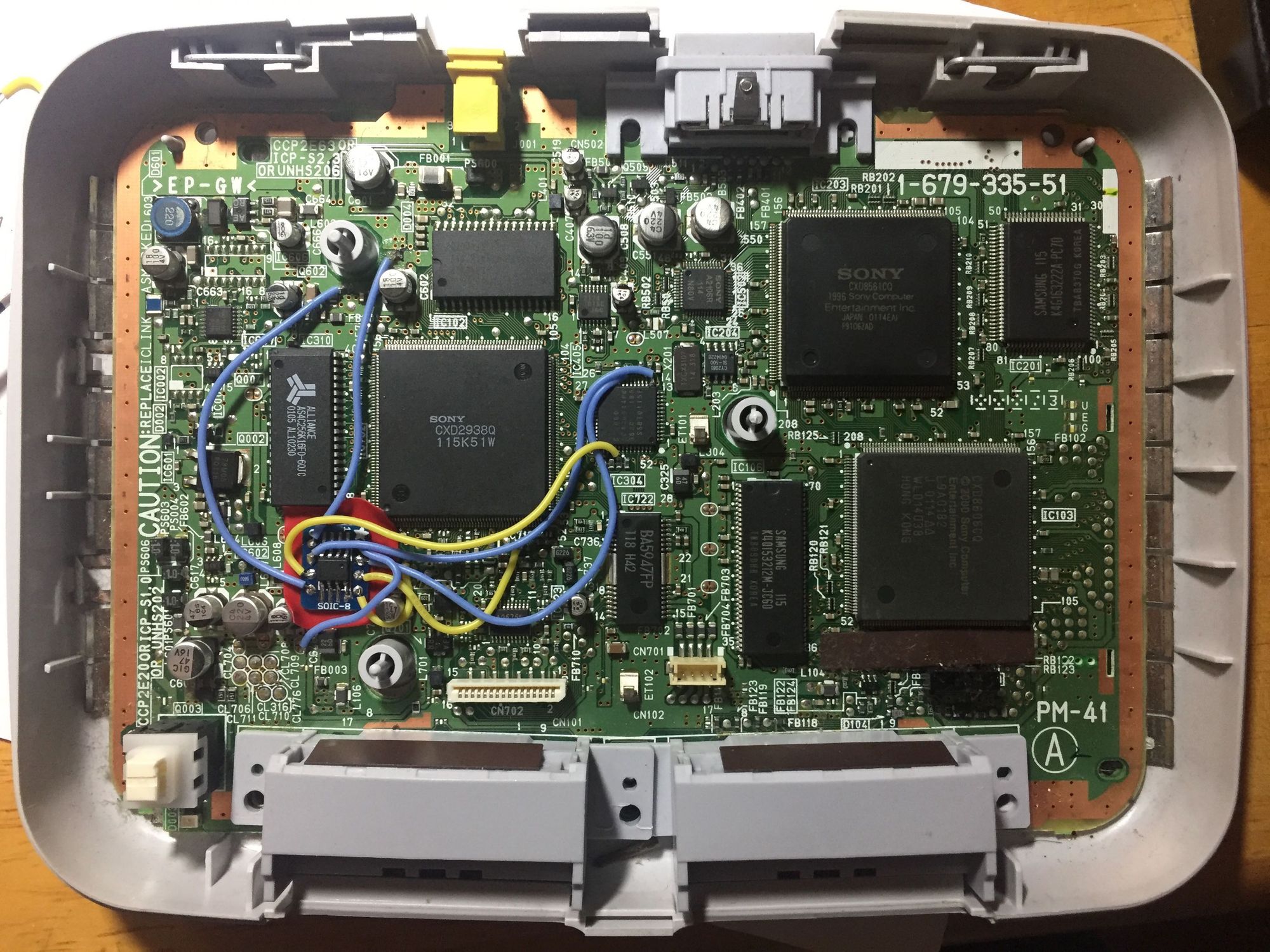

This introduced a new attack surface and helped popularize a new class of hardware modifications known as modchips. A typical PlayStation modchip was a small microcontroller soldered onto the motherboard that spoofed the SCEx authentication sequence, allowing the drive to accept copied or out-of-region discs.

Source: Kevin Chung’s blog


Dozens of modchip designs appeared within a year of the console’s launch, many requiring nothing more than soldering a few wires to specific pads on the board.

Beyond modchips, the PlayStation was also vulnerable to the infamous swap trick: boot with a legitimate disc, hold the lid sensor down so the console believes the lid is still closed, and swap in a burned copy after the initial authentication check.

The broader lesson is that the PlayStation’s main protection lived at the disc-authentication layer, with no cryptographic verification of the code being loaded into memory. The CPU would execute whatever the disc contained. Bypassing the disc check was sufficient to run anything.

The PlayStation 2 (2000) raised the bar slightly with a more robust drive authentication scheme, but it was ultimately still defeatable with modchips and the swap trick. This disc-only approach to security was not unique to Sony: the Sega Saturn relied on similar disc-based authentication, and even the Nintendo GameCube used a proprietary miniDVD format as its primary barrier. None of these consoles attempted to cryptographically verify the code they executed — protection stopped at the optical drive. Get past the disc check, and the CPU asked no further questions.

The Sega Dreamcast deserves a separate mention, as its protection did not rely solely on a simple disc-authentication check. It combined the proprietary GD-ROM format, support for the MIL-CD format, and an executable scrambling scheme. In practice, the console was compromised when enthusiasts learned to abuse the MIL-CD boot path and reproduce the scrambling logic, allowing software to boot from ordinary CD-Rs.

In the end, protecting the media was never going to be enough. The next step was to protect the code itself.

Cryptographic code signing enters the console

The original Xbox (2001) was built on familiar PC hardware (Pentium III derivative, Nvidia GPU, standard hard drive), running a stripped-down OS derived from Windows 2000. It was one of the earliest major home consoles to implement a cryptographic chain of trust rooted in a hidden boot block inside the MCPX. That boot block decrypted and verified an external bootloader, which in turn decrypted and verified the kernel. The kernel then enforced signature checks on the software it loaded.
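To make the idea of a chain of trust concrete, here is a minimal Python sketch in which each stage checks the next one before handing over control. It is a simplified model, not the Xbox implementation: decryption is left out, plain SHA-256 digests stand in for the real verification steps, and the function names and image layout are hypothetical.

```python
import hashlib

DIGEST_LEN = 32  # size of a SHA-256 digest in bytes


def digest(blob: bytes) -> bytes:
    return hashlib.sha256(blob).digest()


def make_bootloader(code: bytes, kernel: bytes) -> bytes:
    """Hypothetical layout: bootloader code followed by the digest of the kernel it accepts."""
    return code + digest(kernel)


def boot(rom_trusted_digest: bytes, bootloader: bytes, kernel: bytes) -> str:
    # Stage 1: the immutable boot block checks the bootloader against a
    # value fixed at manufacturing time.
    if digest(bootloader) != rom_trusted_digest:
        return "halt: bootloader rejected"
    # Stage 2: the verified bootloader checks the kernel against the digest
    # embedded in its own (already verified) image.
    if digest(kernel) != bootloader[-DIGEST_LEN:]:
        return "halt: kernel rejected"
    return "kernel running"


kernel = b"kernel v1"
bootloader = make_bootloader(b"bootloader code", kernel)
rom_value = digest(bootloader)  # burned into the boot block in this model

print(boot(rom_value, bootloader, kernel))
print(boot(rom_value, bootloader, b"patched kernel"))
```

Every link depends on the one before it, which is why the secrecy and integrity of the very first stage matter so much: an attacker who can replace or fully understand the root can rebuild the rest of the chain.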

Andrew “bunnie” Huang was one of the earliest and most influential researchers to reverse-engineer the Xbox security architecture, documenting his work in a 2002 MIT memo and later in Hacking the Xbox: An Introduction to Reverse Engineering — and yes, it is free to download, so go grab a copy! 😉

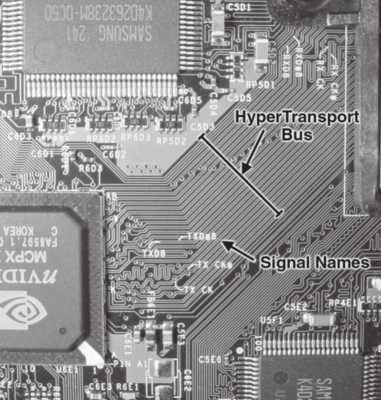

He showed that the Xbox’s hidden boot ROM could be recovered by tapping the HyperTransport bus, because the boot code traversed that bus in the clear. Doing so did not require decapping the chip, but it did require custom FPGA-based bus-sniffing hardware and physical access to the motherboard.

Source: Hacking the Xbox


Recovering the boot ROM broke the secrecy of the console’s hardware root of trust: researchers could now reconstruct the bootloader decryption logic and key material, enabling deeper analysis of the boot chain and ultimately making it possible to create alternative boot code, such as Cromwell.

Code signing raised the bar significantly, but it does not protect against memory-safety bugs in the signed code itself!

Buffer overflows and a horse named Epona

Save files turned out to be a surprisingly effective attack vector. Several original Xbox games — MechAssault, Splinter Cell, and 007: Agent Under Fire — had save file parsers that failed to validate input length. A crafted save file could overflow a buffer and gain code execution within the game process. And because Xbox games ran in kernel mode, a single exploitable game meant full system control.

These attacks became known as softmods.

The Nintendo Wii (2006) repeated the same pattern. The Twilight Hack exploited a stack buffer overflow in The Legend of Zelda: Twilight Princess, triggered by a crafted save file containing an overlong name for Epona, Link’s horse. That exploit allowed the Wii to execute homebrew code from the SD card, and it became one of the earliest widely used paths to installing the Homebrew Channel. Later, more advanced tools such as BootMii pushed control even deeper into the boot process.
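The mechanics of a save-file overflow can be simulated in Python with a byte array standing in for the stack. Everything here is an assumption made for illustration: the save layout (one length byte followed by the name), the 16-byte buffer, and the addresses are hypothetical and do not describe the real Twilight Princess or Xbox save formats.

```python
import struct

NAME_BUF_SIZE = 16          # space the game reserved for the name
LEGIT_RETURN = 0x80001000   # hypothetical return address saved on the stack


def load_save(save: bytes, check_length: bool) -> int:
    """Parse the name field and return the address the CPU would return to."""
    # Simulated stack: name buffer at offset 0, return address right after it.
    stack = bytearray(272)
    struct.pack_into("<I", stack, NAME_BUF_SIZE, LEGIT_RETURN)

    name_len = save[0]                   # length taken from the file itself
    name = save[1:1 + name_len]
    if check_length and len(name) > NAME_BUF_SIZE:
        raise ValueError("name field too long")

    stack[:len(name)] = name             # copy with no bound on the destination
    return struct.unpack_from("<I", stack, NAME_BUF_SIZE)[0]


normal_save = bytes([5]) + b"Epona"
crafted_save = bytes([20]) + b"A" * 16 + struct.pack("<I", 0xDEADBEEF)

print(hex(load_save(normal_save, check_length=False)))
print(hex(load_save(crafted_save, check_length=False)))
```

With the length check enabled, the crafted save is rejected before the copy happens. A single missing comparison is the difference between a corrupted save and attacker-controlled execution.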

The lesson was clear: securing the boot chain while leaving trusted applications free to parse untrusted input unsafely just shifts the attack surface. The seventh generation of consoles tried to address this more systematically, but introduced new problems of its own.

When secure boot fails

The seventh generation of consoles — the PlayStation 3 (2006), Xbox 360 (2005), and Wii (2006) — all made heavy use of asymmetric cryptography to enforce code signing. Only software signed with the manufacturer’s private key would be accepted for execution. The theory was sound. The implementation, not so much.

The “JTAG/SMC Hack” was one of the first and most sophisticated attacks on the Xbox 360’s secure boot implementation, chaining multiple attack surfaces to achieve unsigned code execution. The attack involved reflashing the NAND with a crafted image containing a modified SMC firmware, an old bootloader, and an old vulnerable kernel, then using an exposed JTAG interface to redirect execution into that old vulnerable kernel.

Microsoft’s key design mistakes were leaving a JTAG debug interface exposed and enabled for a small window during the boot process, implicitly trusting the SMC firmware as an unverified component outside the secure boot chain, and providing weak rollback protection that allowed downgrade attacks. This attack vector was closed later with a software update, introducing stronger downgrade protections and preventing the console from booting the old vulnerable kernel.
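Why does rollback protection matter when every image is signed? A validly signed old kernel is still an old kernel, bugs included. The sketch below is a generic model of a downgrade check, not Microsoft’s implementation: the `Console` class is hypothetical, and `min_version` stands in for a one-way hardware counter.

```python
class Console:
    """Toy model of a boot-time version floor."""

    def __init__(self):
        self.min_version = 1  # lowest image version the console will still boot

    def apply_update(self, version: int, anti_rollback: bool) -> None:
        # A robust design raises the floor on every update; it never goes down.
        if anti_rollback:
            self.min_version = max(self.min_version, version)

    def will_boot(self, image_version: int, signature_valid: bool) -> bool:
        if not signature_valid:
            return False  # unsigned or tampered image
        return image_version >= self.min_version


weak = Console()
weak.apply_update(5, anti_rollback=False)
print(weak.will_boot(image_version=2, signature_valid=True))

strong = Console()
strong.apply_update(5, anti_rollback=True)
print(strong.will_boot(image_version=2, signature_valid=True))
```

In the weak configuration the signed but vulnerable version 2 image still boots after the update; in the strong one it is refused, while current images keep working.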

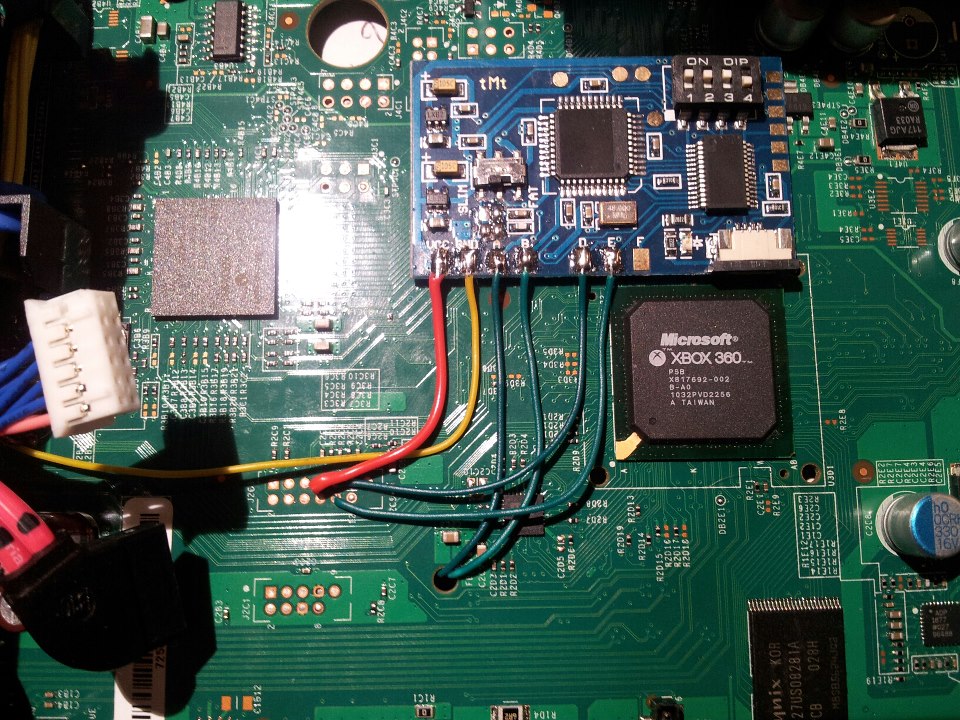

A more fundamental hardware attack followed in 2011: the Reset Glitch Hack (RGH), developed by GliGli and Tiros. RGH used a precisely timed reset glitch, typically combined with temporarily slowing the CPU clock, to skip the bootloader hash comparison, allowing a modified next-stage bootloader to run. It was a classic fault-injection attack, reproducible with cheap hardware (picture below), and it could not be fixed in software. Microsoft addressed it only in later hardware revisions of the console.

Source: Le blog de Chip’n Modz


The PlayStation 3 story is even more instructive from a cryptographic standpoint. Sony used ECDSA to sign critical PS3 software, and ECDSA requires a fresh, unpredictable nonce for each signature. Reuse that nonce, and the private signing key can be recovered algebraically from signatures alone. Sony’s failure went beyond using weak random values: the implementation used the same fixed number as the nonce in every single signature. The fail0verflow security research group revealed this in its 27C3 talk “PS3 Epic Fail” in December 2010, showing that key recovery was straightforward from any two signatures over different messages.
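The algebra behind that key recovery fits in a short, self-contained Python sketch (Python 3.8+ for modular inverses via `pow`). The curve used here is secp256k1, chosen only because its parameters are widely published; it is an assumption for the example and not the curve Sony used. The key, nonce, and messages are made up.

```python
import hashlib

# secp256k1 domain parameters (illustrative choice, not the PS3's curve)
P = 2**256 - 2**32 - 977
N = 0xFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFEBAAEDCE6AF48A03BBFD25E8CD0364141
G = (0x79BE667EF9DCBBAC55A06295CE870B07029BFCDB2DCE28D959F2815B16F81798,
     0x483ADA7726A3C4655DA4FBFC0E1108A8FD17B448A68554199C47D08FFB10D4B8)


def point_add(a, b):
    """Add two curve points; None represents the point at infinity."""
    if a is None:
        return b
    if b is None:
        return a
    (x1, y1), (x2, y2) = a, b
    if x1 == x2 and (y1 + y2) % P == 0:
        return None
    if a == b:
        m = 3 * x1 * x1 * pow(2 * y1, -1, P) % P    # tangent slope
    else:
        m = (y2 - y1) * pow(x2 - x1, -1, P) % P     # chord slope
    x3 = (m * m - x1 - x2) % P
    return x3, (m * (x1 - x3) - y1) % P


def scalar_mult(k, point):
    """Double-and-add scalar multiplication."""
    result = None
    while k:
        if k & 1:
            result = point_add(result, point)
        point = point_add(point, point)
        k >>= 1
    return result


def digest(message: bytes) -> int:
    return int.from_bytes(hashlib.sha256(message).digest(), "big") % N


def sign(private_key: int, message: bytes, nonce: int):
    r = scalar_mult(nonce, G)[0] % N
    s = pow(nonce, -1, N) * (digest(message) + r * private_key) % N
    return r, s


def recover_private_key(msg1, sig1, msg2, sig2) -> int:
    """Works only when both signatures were made with the same nonce."""
    (r, s1), (_, s2) = sig1, sig2
    z1, z2 = digest(msg1), digest(msg2)
    k = (z1 - z2) * pow(s1 - s2, -1, N) % N     # s1 - s2 = (z1 - z2) / k
    return (s1 * k - z1) * pow(r, -1, N) % N    # s1 = (z1 + r*d) / k


private_key = 0x1F2E3D4C5B6A79881726354453627180   # made-up signing key
fixed_nonce = 123456789                            # the mistake: never changes

sig1 = sign(private_key, b"firmware v1", fixed_nonce)
sig2 = sign(private_key, b"firmware v2", fixed_nonce)
print(recover_private_key(b"firmware v1", sig1, b"firmware v2", sig2) == private_key)
```

Both signatures share the same `r` value, which is what gives the reuse away to anyone looking at them. From there, two subtractions and two modular inverses are enough to extract the key.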

Researcher George Hotz (known as geohot) subsequently published Sony’s private signing key, making it possible to sign arbitrary code as legitimate on PS3 consoles that trusted that key. Sony sued and later settled, but the problem was fundamentally hard to unwind because the key was embedded into the root-of-trust implementation and could not be easily revoked!

This incident became one of the best-known examples of a catastrophic cryptographic implementation failure. The algorithm was sound, but the implementation was not. Random numbers matter in many areas of computer science, and especially in cryptography. To learn more about them, have a look at my introductory article on random numbers.

In the end, this has been a learning process for both attackers and designers. The attacks keep pushing boundaries, and designers learn from mistakes to improve security. But did that really make the latest consoles more secure?

More security is better security?

The original Nintendo Switch (2017) was based on Nvidia’s Tegra X1, whose secure-boot root of trust was implemented in BootROM code, as is common in modern SoCs. In 2018, security researcher Kate Temkin and the ReSwitched team disclosed fusée gelée (CVE-2018-6242), a buffer overflow in the BootROM’s USB Recovery Mode (RCM) handler.

Triggering the exploit requires only a piece of wire to short two pins on the right Joy-Con connector (to enter RCM) and a USB connection to a PC or a small dongle. By sending an oversized USB control transfer, an attacker can overflow the buffer, overwrite the return address of the RCM handler on the stack, and redirect execution to arbitrary code in SRAM, before any signature verification takes place.

Because the vulnerability is in mask ROM, Nintendo cannot patch it on the millions of Switch consoles already in the field. Any Switch with the original Tegra X1 chip is permanently vulnerable. Nintendo had to fix it in later hardware revisions such as Tegra X1+ (codenamed Mariko).

As an aside, the embedded industry has seen other vulnerabilities in root-of-trust implementations, like the one found on NXP i.MX6 chips.

On the PlayStation 4 (2013) side, no public break of the hardware root of trust is widely documented as of this writing, but several remote code execution exploits have been published that allow unauthorized software to run after normal boot.

One well-known example used a WebKit vulnerability to gain code execution in the browser process, followed by a kernel exploit to escape the sandbox and achieve kernel-level control. Another major attack, PPPwn, targeted the console’s Ethernet/PPPoE networking stack and exploited a vulnerability in the PPPoE implementation to achieve remote kernel code execution.

Because these were software-layer attacks rather than public breaks of the hardware root of trust, Sony could mitigate them through firmware updates. Over time, newer firmware versions reduced the exposed attack surface and closed many of the publicly known exploit paths.

Now, after three decades of hacks, exploits, glitches, and key recoveries — what can we learn from it?

Lessons from three decades of console security

If you look at a modern console — the PS5 or the Xbox One and Xbox Series — it has all the mitigations you would expect from a well-designed secure embedded device: secure boot, full disk encryption, isolation via hypervisors, a robust update system to patch vulnerabilities, and more. And yet, given enough resources and motivation, sufficiently complex systems are eventually exploited.

For example, there are publicly documented vulnerabilities and exploit chains for PS5. Two well-known examples are a BD-J exploit chain reported via HackerOne that chained five bugs to execute arbitrary payloads, and Byepervisor, a public PS5 hypervisor exploit for firmware 1.xx–2.xx.

For modern Xbox platforms, public 2024 work exposed SystemOS kernel exploitation on both Xbox One and Xbox Series, while the 2026 Bliss boot-ROM voltage-glitch attack was publicly presented for the original Xbox One.

Over time, console vendors learned that technical mitigations alone are not enough. So they added service lock-in as a complementary measure. For example, if you jailbreak a PS4, you lose access to the PlayStation Network. For users who value online play, that cost outweighs any benefit from running unsigned code.

In the end, the lesson is simple: security is not a feature you add to a product; it is a property of its architecture. The products with the most durable security are those built around two fundamental principles: defense in depth and security by design, applied in a way that is consistent with the system’s threat model.

I hope you enjoyed this journey through the history of video game console security — I certainly had fun writing it.

Please email your comments or questions to hello at sergioprado.blog, or sign up for the newsletter to receive updates.